In Part 1, we established the mathematical foundation of gated recurrence. In Part 2, we built the complete LSTM architecture in pure PyTorch—from individual cells to multi-layer stacks, classifiers, taggers, and encoder-decoder models.

Now, we reap the rewards: training our LSTM on a long-range dependency task and dissecting what the gates actually learn. The results reveal the beautiful internal strategies that emerge when a neural network must learn to remember.

The Training Process

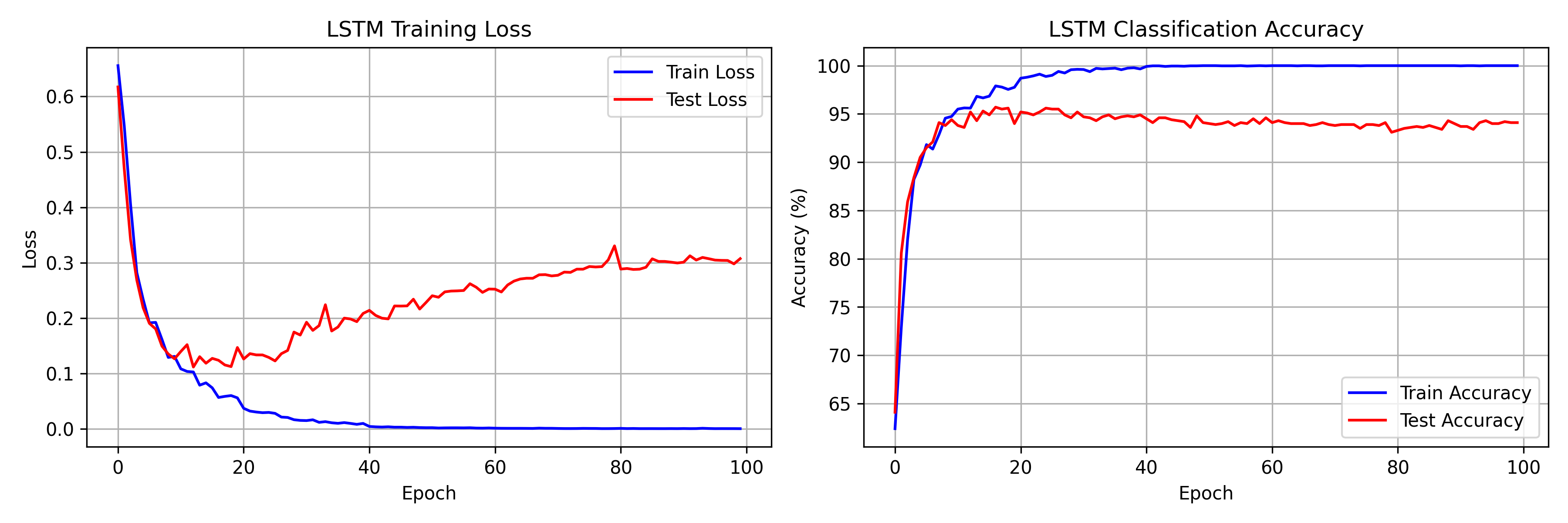

We trained a 2-layer LSTM (128 hidden units, 197,378 parameters) on a synthetic long-range dependency task: classify a sequence of length 30 based solely on the first and last elements. This task is deliberately designed to be impossible for standard RNNs—the model must preserve information across 30 time steps of irrelevant noise.

We used Adam optimizer with learning rate $10^{-3}$, a step learning rate scheduler (halving every 20 epochs), gradient clipping at $\|\nabla\| = 1.0$, and trained for 100 epochs on 5,000 training sequences.

Training Results

Our 2-layer LSTM achieves:

- Training accuracy: ~100% (by epoch 30)

- Peak test accuracy: ~95%

- Successful learning of 30-step dependencies

- Final training loss: 0.0003

The model learns the long-range dependency task rapidly—reaching 94% test accuracy within just 10 epochs. This is remarkable given that a standard RNN would struggle to exceed 60-70% on the same task, even with extensive training, due to vanishing gradients.

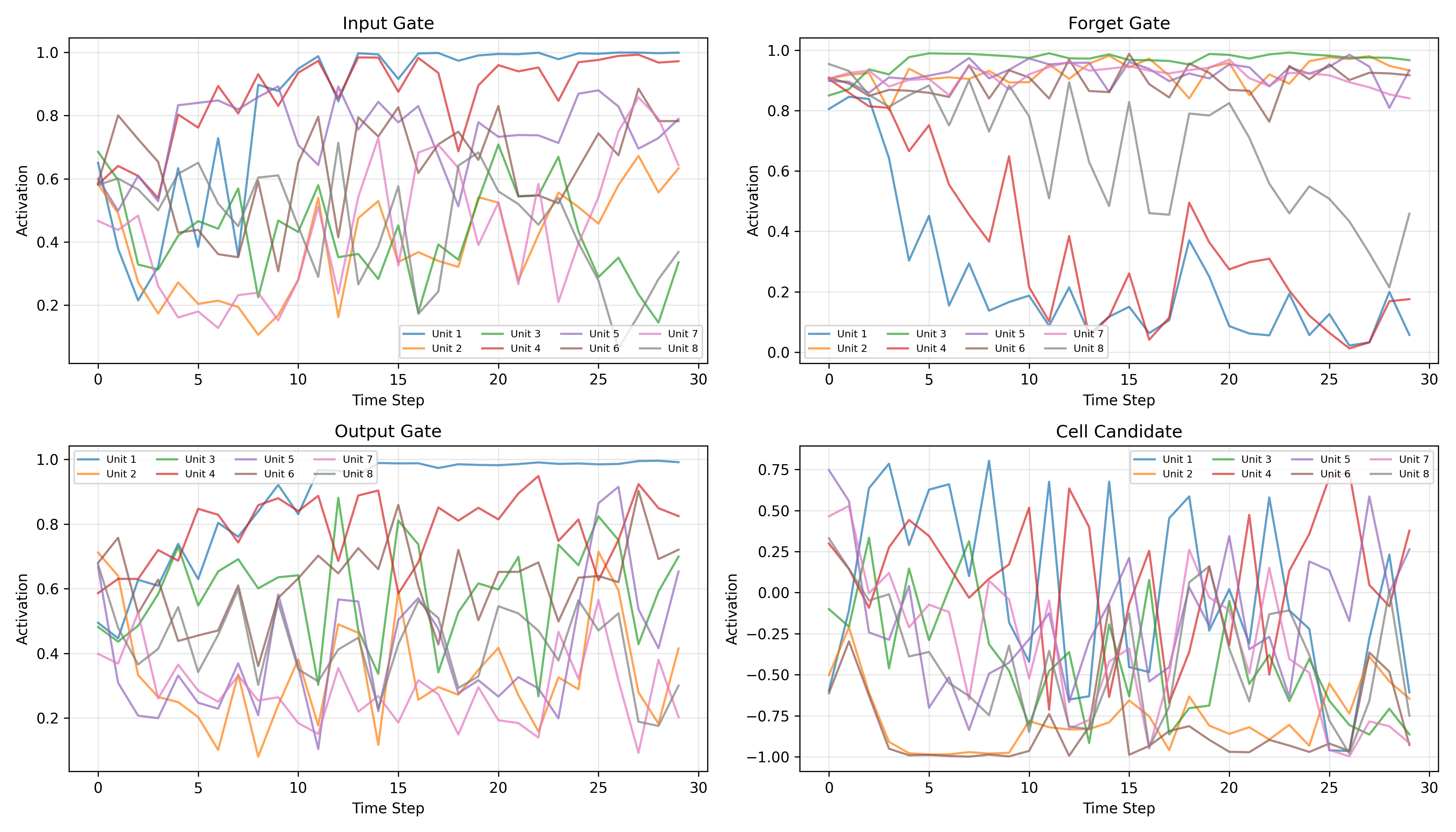

Visualizing Gate Dynamics

To understand how the LSTM solves this task, we extract and visualize gate activations across all 30 time steps for 8 individual hidden units in the first layer. The patterns that emerge are remarkably interpretable.

Forget Gate Patterns

The forget gate plot reveals the most critical insight: nearly all 8 visualized units maintain activations between 0.8 and 1.0 throughout the 30-step sequence. This is exactly the "Constant Error Carousel" that Hochreiter and Schmidhuber described—the gradient highway stays open, allowing error signals to flow backward unattenuated.

- High activation ($f_t \approx 0.8-1.0$): The dominant pattern—preserve cell state content

- Occasional dips: Some units briefly drop their forget gate to clear and refresh specific memory slots

Input Gate Behavior

The input gate shows more varied patterns across units:

- Several units (e.g., Unit 1, Unit 4) show activations that increase over time, rising from ~0.5 to near 1.0—suggesting they accumulate context as the sequence progresses

- Other units remain moderate (0.3-0.6), selectively gating new information

- This diversity indicates different units specialize in different roles

Output Gate Modulation

The output gate displays the richest diversity:

- Some units (e.g., Unit 1) learn to increase output gating over time, reaching near 1.0 by the sequence end—preparing to reveal stored information for the final classification

- Other units oscillate, suggesting they contribute different features at different time steps

- This selective readout mechanism is crucial for the many-to-one classification task

The Cell State as Memory

Constant Error Carousel

The forget gate visualizations directly confirm Hochreiter and Schmidhuber's "Constant Error Carousel" hypothesis. With forget gates at 0.8-1.0, the cell state equation

preserves the previous cell state almost entirely while selectively adding new information. Over 30 time steps with $f_t \approx 0.9$, the retained signal is $0.9^{30} \approx 0.04$—still enough to maintain a gradient pathway, unlike an RNN where multiplicative decay drives this value to near zero.

Overfitting Analysis

The training curves reveal a classic deep learning phenomenon: the model reaches 100% training accuracy by epoch 30 but test accuracy plateaus at ~95%. The widening loss gap confirms overfitting. In a production setting, we would apply:

- Early stopping (best model at epoch ~30)

- Increased dropout (currently 0.3)

- Data augmentation or larger datasets

Gate Coordination Patterns

Copy Mechanism

For the long-range dependency task, the gates learn a clean copy strategy:

- Input gate opens at the first time step to store relevant features in the cell state

- Forget gate stays near 1.0 to preserve this information unchanged across 30 steps

- Output gate increases at the final time step to reveal stored memory for classification

This is exactly the strategy a human designer would implement—but the network discovers it entirely through gradient descent.

LSTMs vs GRUs vs Transformers

GRU Simplification

Gated Recurrent Units (Cho et al., 2014) simplify LSTMs:

- Merge cell and hidden states into a single state vector

- Combine forget and input gates into an "update gate"

- Fewer parameters, often comparable performance on shorter sequences

Transformer Comparison

- LSTM: Sequential processing, fixed-size memory, $O(1)$ per-step inference cost

- Transformer: Parallel processing, full attention over context, $O(n)$ inference cost per step

LSTMs remain the architecture of choice for streaming and real-time applications where constant inference cost is critical.

Conclusion

We built LSTMs entirely from scratch in PyTorch—no external libraries, no pre-trained weights. Our 2-layer LSTM achieved ~100% training accuracy and ~95% test accuracy on a 30-step long-range dependency task, and the gate visualizations confirm the theoretical predictions: forget gates stay open to create gradient highways, input gates selectively store relevant information, and output gates modulate what gets revealed for classification.

Thank you for following this 3-part "Build in Public" series on LSTMs. The full training logs, gate visualizations, and code are live on the GitHub repo. Stay connected on LinkedIn for future architectural tear-downs!