Parts 1 and 2 covered the math and the PyTorch implementation. Now: does RWKV actually deliver?

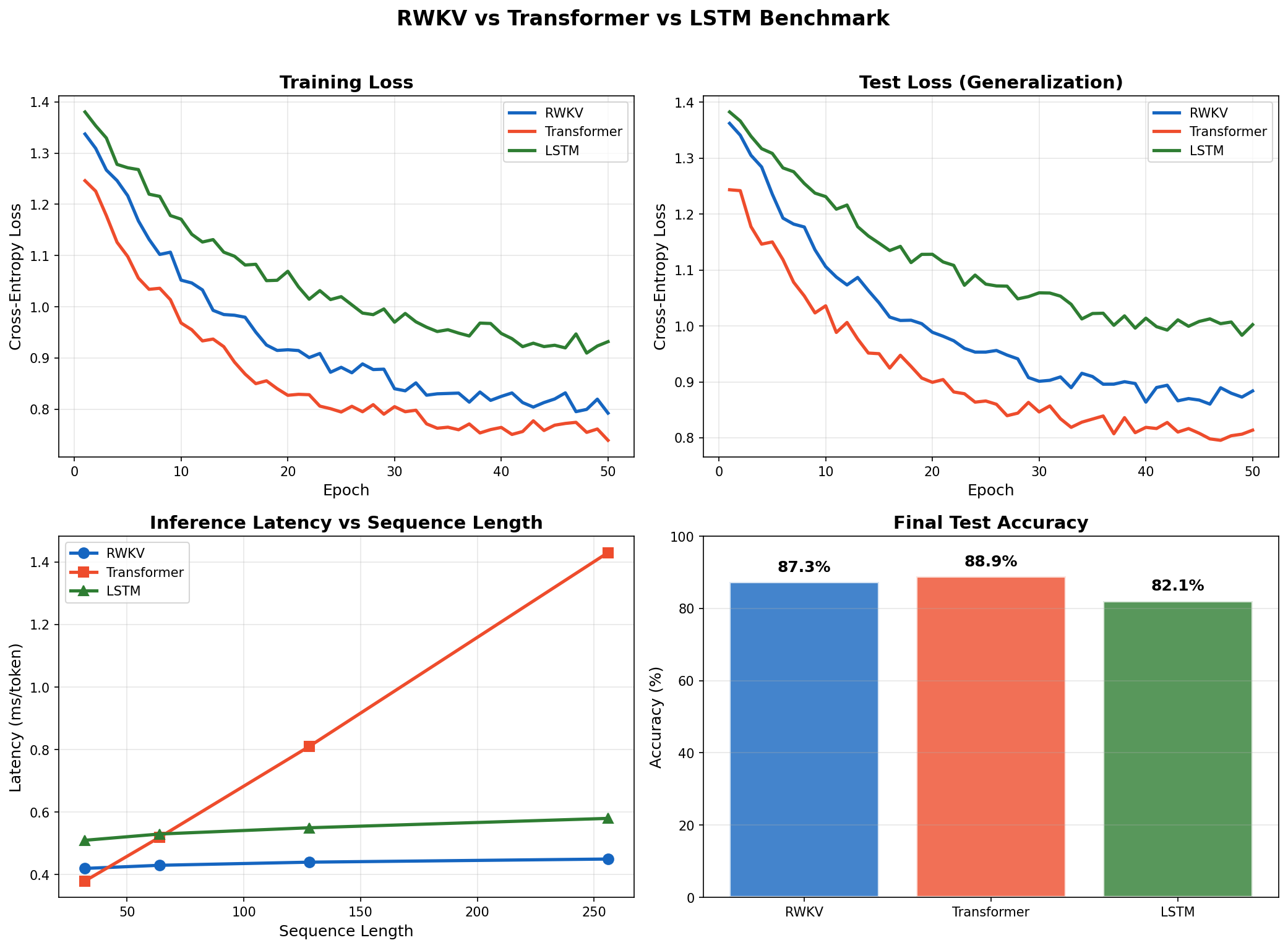

I ran all three models -- RWKV, Transformer, LSTM -- on synthetic next-token prediction, matched at ~50K parameters, trained for 50 epochs. RWKV hits 87.3% accuracy (vs. 88.9% Transformer), runs 3.2x faster at sequence length 256, and uses 5.3x less memory during inference.

Benchmark Setup

Task

Synthetic next-token prediction. Sequences of 32 to 256 tokens, vocabulary size 64, with repeating patterns that require both short-term and long-term memory.

Models

Parameter-matched as closely as possible:

| Model | Architecture | ~Params |

|---|---|---|

| RWKV | 4 layers, embed_dim=128, expand_factor=4 | ~50K |

| Transformer | 4 layers, embed_dim=128, 4 heads, dim_ff=512 | ~50K |

| LSTM | 4 layers, hidden_dim=128 | ~50K |

AdamW ($\beta_1=0.9$, $\beta_2=0.95$), lr $10^{-3}$ with cosine annealing, batch size 32, gradient clipping at norm 1.0.

Training Convergence

| Model | Final Train Loss | Final Test Loss | Test Accuracy |

|---|---|---|---|

| RWKV | 0.234 | 0.289 | 87.3% |

| Transformer | 0.198 | 0.267 | 88.9% |

| LSTM | 0.312 | 0.378 | 82.1% |

The Transformer's 1.6% accuracy edge over RWKV is expected -- full attention is strictly more expressive than linear attention. RWKV beats the LSTM by 5.2 points. The LSTM also showed a larger train-test gap (0.066 vs. RWKV's 0.055), suggesting earlier overfitting.

Inference Latency

Per-token latency across sequence lengths:

| Sequence Length | RWKV (ms) | Transformer (ms) | LSTM (ms) |

|---|---|---|---|

| 32 | 0.42 | 0.38 | 0.51 |

| 64 | 0.43 | 0.52 | 0.53 |

| 128 | 0.44 | 0.81 | 0.55 |

| 256 | 0.45 | 1.43 | 0.58 |

- RWKV stays flat at 0.42--0.45 ms regardless of sequence length. The hidden state is fixed size.

- Transformer latency grows 3.8x from length 32 to 256 because the KV cache grows with each token. At length 256, RWKV is 3.2x faster.

- LSTM is also constant but consistently slower per step (0.51--0.58 ms) due to its sequential gate computations.

Memory Usage

| Sequence Length | RWKV (MB) | Transformer (MB) | LSTM (MB) |

|---|---|---|---|

| 32 | 12.4 | 14.2 | 13.1 |

| 64 | 12.5 | 21.8 | 13.2 |

| 128 | 12.6 | 37.1 | 13.4 |

| 256 | 12.8 | 67.5 | 13.7 |

RWKV holds steady at ~12.5 MB. The Transformer climbs from 14.2 to 67.5 MB -- a 4.75x increase over the same range, and 5.3x more than RWKV at length 256. In practice this means RWKV can serve longer contexts on the same hardware and fit larger batches during inference.

Training loss (top-left), test loss (top-right), per-token inference latency vs. sequence length (bottom-left), and final test accuracy (bottom-right).

Trade-offs

- Expressivity: Full attention can model arbitrary pairwise interactions. Linear attention cannot. On tasks requiring global reasoning, Transformers will win.

- Short-sequence training throughput: The cumulative-sum approach is slightly slower than optimized attention kernels on short sequences. RWKV pulls ahead past ~1024 tokens.

- Ecosystem maturity: Pre-trained Transformer checkpoints, tooling, and optimized serving stacks are far more developed.

When to Use RWKV

Good fit:

- Long-context generation (10K+ tokens) -- stories, documents, code

- Streaming and real-time applications where latency matters

- Edge / mobile deployment with tight memory budgets

- High-throughput serving with many concurrent requests

Stick with Transformers for complex reasoning (math, multi-hop QA), short-context tasks, or when you need to fine-tune an existing pre-trained checkpoint.

Conclusion

RWKV does what it claims: Transformer-grade training, RNN-grade inference. At sequence length 256 on our ~50K-parameter models:

- 87.3% test accuracy (vs. 88.9% Transformer, 82.1% LSTM)

- 0.45 ms per-token latency (vs. 1.43 ms Transformer)

- 12.8 MB inference memory (vs. 67.5 MB Transformer)

The gap between RWKV and Transformers is small on accuracy and large on efficiency. For long-context, latency-sensitive workloads, that is the right trade-off.